CN114693873A - A point cloud completion method based on dynamic graph convolution and attention mechanism - Google Patents


- Publication number

- CN114693873A, CN202210315804.5A

- Authority

- CN

- China

- Prior art keywords

- feature

- point cloud

- point

- features

- graph convolution

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Granted

Classifications

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T17/00—Three-dimensional [3D] modelling for computer graphics

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F18/00—Pattern recognition

- G06F18/20—Analysing

- G06F18/25—Fusion techniques

- G06F18/253—Fusion techniques of extracted features

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/04—Architecture, e.g. interconnection topology

- G06N3/045—Combinations of networks

Abstract

The invention provides a point cloud completion method based on dynamic graph convolution and an attention mechanism, comprising: step S1, extracting features using dynamic graph convolution; step S2, aggregating features by combining attention pooling with max pooling; and step S3, completing and reconstructing the features of a partially missing spatial target. The application defines point neighborhoods and updates a dynamic neighborhood graph, then uses that graph to update the point features with dense connections. It aggregates the point cloud features in a multi-channel manner that combines attention pooling and max pooling, which completes the missing point cloud efficiently and preserves and restores the details and geometric structure of the input point cloud as far as possible. The application also proposes a one-stage network model that fuses the point-wise features and the global geometric features of the point cloud, completes the point cloud in feature space, and expands and refines the features to reconstruct and directly output the complete point cloud.

Description

Technical Field

The invention belongs to the field of model construction and design, and in particular relates to a point cloud completion method based on dynamic graph convolution and an attention mechanism.

Background

In real scenes, point clouds acquired with 3D scanning devices such as lidar are often sparse and incomplete because of limits on sensor resolution and viewing angle and because of occlusion within or between objects. This makes deeper downstream use of the point clouds difficult. Point cloud shape completion addresses the problem of recovering a complete point cloud from a partial observation: for any given point cloud with missing structure, it produces the corresponding point cloud with a complete shape. The task underlies many downstream tasks, such as shape classification and segmentation, and is an indispensable step in subsequent applications.

Chinese patent CN112614071A discloses a self-attention-based method and device for diverse point cloud completion, in the technical field of computer 3D point cloud completion and deep learning. The method includes: acquiring point cloud data and processing it to obtain an input point proxy sequence; encoding the point proxy sequence to obtain point encoding vectors, and decoding those vectors to obtain predicted point proxies; and feeding the predicted point proxies into a multilayer perceptron to obtain predicted point centers, from which the complete point cloud data is recovered. The point cloud is thus processed into a sequence of point proxies, and an encoder and a decoder model the long-range relationships between points to reconstruct the point cloud. However, that method extracts point cloud features with a point-wise shared multilayer perceptron and does not make full use of the local structural information between points. In the completion task, the incompleteness and sparsity of the input point cloud make it difficult to obtain useful neighborhood information.

Chinese patent CN114004871A discloses a point cloud registration method and system based on point cloud completion. It samples the source and target point clouds and extracts features from each; fuses the features of the two point clouds with an attention mechanism so that their semantic information completes each other; extracts high-dimensional features of the completed point clouds and uses them to learn the position information of the other point cloud, determining for each point in the source point cloud its corresponding point in the target point cloud; and, from the corresponding points, obtains the current rigid transformation parameters by singular value decomposition and uses them to register the source point cloud to the target point cloud. The method does not require heavy pruning of the original point cloud and can fill in missing point cloud information, giving efficient and accurate registration. However, its completion of the missing parts suffers from blurred details and distorted shapes. Moreover, multi-stage network structures have grown increasingly complex in pursuit of better completion results. Because point cloud completion is an upstream task, effort should go into designing more efficient network structures.

Most existing completion networks extract point cloud features with a point-wise shared multilayer perceptron and do not make full use of the local structural information between points. Some later studies used methods such as ball query to extract multi-scale and multi-resolution neighborhood information, but selecting neighbors in Euclidean space is very sensitive to the distribution and density of the points. In the completion task, the incompleteness and sparsity of the input point cloud make it difficult to obtain useful neighborhood information. In addition, using only max pooling in the feature extraction encoder carries a risk of information loss.

Summary of the Invention

The invention aims to solve the above problems: to make full use of the prior information in the observable part of the point cloud and learn the relevant structural attributes, to generate a complete and fine shape for the spatial object while retaining the detailed features of the input point cloud, and to avoid the information loss caused by using max pooling alone.

To achieve this, the invention provides a point cloud completion method based on dynamic graph convolution and an attention mechanism.

A point cloud completion method based on dynamic graph convolution and an attention mechanism, comprising:

Step S1: extracting features using dynamic graph convolution;

Step S2: aggregating features by combining attention pooling with max pooling;

Step S3: completing and reconstructing the features of the partially missing spatial target.

Preferably, the feature extraction using dynamic graph convolution in step S1 includes:

Step S11: defining neighborhoods and updating the dynamic neighborhood graph;

Step S12: dense connection.

Preferably, in step S11 the neighborhoods are defined and the dynamic neighborhood graph is updated by using the k-nearest-neighbor (k-NN) algorithm to build a local graph around each point in feature space, so as to aggregate local, structure-aware context features. For each point x_i in the point cloud, k neighbor nodes x_j = {x_1, x_2, …, x_k} are selected according to their proximity in feature space, and the feature embeddings x_i and x_j - x_i are combined to obtain the edge feature e_ij, which can be expressed as:

where N_i denotes the neighborhood of point i, and the combining operation is concatenation.
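The neighborhood construction described above can be sketched in plain Python. This is a minimal illustration, not the patent's implementation: the function name `knn_edge_features`, the use of Euclidean distance as the measure of proximity, and the exclusion of a point from its own neighbor list are all assumptions made here.

```python
import math

def knn_edge_features(features, k):
    """For each point feature x_i, find its k nearest neighbors x_j in
    feature space and return the edge features [x_i, x_j - x_i]."""
    n = len(features)
    edges = []
    for i, xi in enumerate(features):
        # Rank all other points by Euclidean distance in feature space
        # (assumed metric; the point itself is excluded by assumption).
        others = sorted(
            (j for j in range(n) if j != i),
            key=lambda j: math.dist(xi, features[j]),
        )
        neighbors = others[:k]
        # Concatenate x_i with the offset (x_j - x_i) for each neighbor.
        edges.append([
            list(xi) + [b - a for a, b in zip(xi, features[j])]
            for j in neighbors
        ])
    return edges

# Toy input: four points with 2-dimensional features.
points = [(0.0, 0.0), (1.0, 0.0), (0.0, 2.0), (5.0, 5.0)]
edge_feats = knn_edge_features(points, k=2)
```

Because the neighbors are chosen from the current features and not from the raw coordinates, running the same routine on the output of each layer gives a different graph per layer, which is the sense in which the neighborhood graph is dynamic.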

Preferably, the dense connection in step S12 uses a dynamic graph convolution module: the input features are transformed into a new feature embedding with a smaller feature dimension, and the neighborhood graph is built dynamically from the transformed features. The edge features of that graph are then passed to a shared multilayer perceptron layer. To retain the information from the low-dimensional layers, the output of each layer is used as input to all subsequent layers, expressed as:

Here the quantities related are the output feature of point x_i at layer l and its output feature at layer l-1; h_θ(·) denotes the shared multilayer perceptron layer, and the combining operation is concatenation. Finally, max pooling is used to obtain aggregated local features that are permutation-invariant within each local graph.

Beyond the connections inside a subunit, the output of each subunit is also passed through skip connections to every subsequent subunit as input, which can be written as:

where F_i denotes the output feature of the i-th subunit, E_i(·) denotes the i-th subunit, F_in denotes the input feature, and the combining operation is concatenation.

Preferably, the feature aggregation in step S2 combines attention pooling and max pooling to extract global features, which are aggregated with the point-wise local features F_p to generate F_a.

Preferably, the feature aggregation takes the aggregate of the point-wise features F_p and the global features as the input to the next stage, so that fine-grained shapes are generated while the original features of the input point cloud are retained.

Preferably, the attention pooling computes an attention score a_i for each element of the input features:

where FC(·) denotes a fully connected layer and its argument is the input feature of the i-th point.

Each element is then multiplied by its score, and the products are summed to give the aggregated feature f_out, expressed as:

where f_out denotes the output feature and h_θ(·) denotes the shared multilayer perceptron layer.

Preferably, the feature completion and reconstruction in step S3 uses a feature completion module to expand and refine the features, from which the coordinates of the complete point cloud are reconstructed.

Preferably, the feature completion module has a hierarchical residual structure.

Preferably, the feature completion and reconstruction in step S3 includes:

S101: first, obtaining a feature matrix L_0 of size N×C through a shared multilayer perceptron, where C is the feature dimension and N is the number of points;

S102: replicating the transformed feature matrix r times to obtain a new feature map;

S103: generating r different two-dimensional grids containing m grid points, expanding them to the size of the replicated features, and concatenating the grid point coordinates with the replicated features;

S104: obtaining an expanded feature matrix H_0 of size rN3×C through a self-attention unit and a shared multilayer perceptron;

S105: reshaping H_0 into a feature matrix L_1 with the same dimensions as L_0, and taking the difference between L_1 and L_0 to obtain the residual ΔL;

S106: passing ΔL through steps S102 to S104 to obtain the expanded residual feature H_1, and adding H_1 to H_0 to obtain the expanded feature H_out;

S107: finally, obtaining the complete point cloud of size rN3×3 through a shared multilayer perceptron with parameters [C, 128, 64, 3].

The advantages and effects of the application are as follows:

1. The application defines the neighborhood of each point in feature space, that is, it finds neighboring points by the similarity of their features, and it dynamically updates the neighborhood graph after each layer, thereby capturing structure-aware and content-aware context information for the point cloud.

2. Alongside max pooling, the application uses attention pooling to attend to specific salient structural information, and it proposes a multi-channel scheme that combines the max pooling and attention pooling operations to extract global geometric features that carry richer point cloud information.

3. The application proposes a lightweight point cloud completion network that combines graph convolution and an attention mechanism. It efficiently generates completion results for partially missing point clouds and preserves and restores the details and geometric structure of the input point cloud as far as possible.

4. The application proposes a one-stage network model that outputs the complete point cloud directly, without a multi-step completion strategy. Unlike previous methods, it does not generate the complete point cloud by directly decoding a feature embedding compressed by pooling. Instead, it fuses point-wise features with global geometric features, completes the point cloud in feature space, and expands and refines the features to reconstruct the complete point cloud.

The above is only an overview of the technical solution of the application. So that the technical means of the application can be understood more clearly and implemented according to the specification, and so that the above and other purposes, features and advantages of the application are easier to understand, preferred embodiments of the application are described in detail below with reference to the accompanying drawings.

The above and other purposes, advantages and features of the application will become clearer to those skilled in the art from the following detailed description of specific embodiments in conjunction with the accompanying drawings.

Brief Description of the Drawings

To explain the technical solutions in the embodiments of the application or in the prior art more clearly, the drawings used in describing them are briefly introduced below. The drawings described below show some embodiments of the application; those of ordinary skill in the art can derive other drawings from them without creative effort. Throughout the drawings, similar elements or parts are generally identified by similar reference numerals, and elements or parts are not necessarily drawn to scale.

FIG. 1 shows the deep learning model architecture, combining graph convolution and the attention mechanism, of the point cloud completion method provided by the invention;

FIG. 2 is a schematic diagram of the dynamic graph convolution of the point cloud completion method provided by the invention;

FIG. 3 is a schematic diagram of the feature completion and coordinate reconstruction of the point cloud completion method provided by the invention;

FIG. 4 compares the results of the point cloud completion method provided by the invention.

Detailed Description

To make the purposes, technical solutions and advantages of the embodiments of the application clearer, the technical solutions are described clearly and completely below with reference to the drawings. The described embodiments are some, not all, of the embodiments of the application. Specific details such as particular configurations and components are provided in the following description only to aid a full understanding of the embodiments. It should therefore be clear to those skilled in the art that various changes and modifications can be made to the embodiments described here without departing from the scope and spirit of the application. Descriptions of well-known functions and constructions are omitted for clarity and conciseness.

It should be understood that references throughout the specification to "one embodiment" or "this embodiment" mean that a particular feature, structure or characteristic described in connection with the embodiment is included in at least one embodiment of the application. Appearances of "one embodiment" or "this embodiment" in various places in the specification therefore do not necessarily refer to the same embodiment. Furthermore, the particular features, structures or characteristics may be combined in any suitable manner in one or more embodiments.

In addition, the application may repeat reference numerals and/or letters in different examples. This repetition is for simplicity and clarity and does not in itself indicate a relationship between the various embodiments and/or arrangements discussed.

The term "and/or" herein merely describes an association between associated objects and indicates that three relationships may exist; for example, "A and/or B" may mean: A alone, B alone, or both A and B. The term "/and" herein describes another association between associated objects and indicates that two relationships may exist; for example, "A/and B" may mean: A alone, or both A and B. The character "/" herein generally indicates an "or" relationship between the objects before and after it.

The term "at least one" herein merely describes an association between associated objects and indicates that three relationships may exist; for example, "at least one of A and B" may mean: A alone, both A and B, or B alone.

It should also be noted that relational terms such as "first" and "second" are used herein only to distinguish one entity or operation from another, and do not necessarily require or imply any actual relationship or order between those entities or operations. Moreover, the terms "comprise", "include" and any variants of them are intended to cover non-exclusive inclusion.

Embodiment 1

This embodiment describes a point cloud completion method based on dynamic graph convolution and an attention mechanism. The overall model architecture is shown in FIG. 1, which shows the deep learning model architecture, combining graph convolution and the attention mechanism, of the point cloud completion method provided by the invention.

A point cloud completion method based on dynamic graph convolution and an attention mechanism, comprising:

S1: feature extraction;

S2: feature aggregation;

S3: feature completion and reconstruction.

Further, the feature extraction in step S1 includes:

S21: defining neighborhoods;

S22: updating the dynamic neighborhood graph;

S23: dense connection.

Further, the neighborhoods are defined and the dynamic neighborhood graph is updated by using the k-nearest-neighbor (k-NN) algorithm to build a local graph around each point in feature space, so as to aggregate non-local, structure-aware context features. For each point x_i in the point cloud, k neighbor nodes x_j = {x_1, x_2, …, x_k} are selected according to their proximity in feature space, and the feature embeddings x_i and x_j - x_i are combined to obtain the edge feature e_ij, which can be expressed as:

where N_i denotes the neighborhood of point i, and the combining operation is concatenation.
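The formula for the edge feature does not appear in this text. From the symbols defined around it, one plausible form is given below. It is offered as a reconstruction under assumptions, not as the patent's exact formula: the symbol \oplus for concatenation is assumed, and the original may apply a further mapping to the concatenated vector.

```latex
e_{ij} = x_i \oplus (x_j - x_i), \qquad j \in N_i
```

In this form x_i carries the point's own feature, while x_j - x_i carries the offset to each neighbor, so every edge feature has twice the dimension of a point feature.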

Further, the dense connection uses a dynamic graph convolution module, shown in FIG. 2, which is a schematic diagram of the dynamic graph convolution of the point cloud completion method provided by the invention.

The input features are transformed into a new feature embedding with a smaller feature dimension, and the neighborhood graph is built dynamically from the transformed features. The edge features of that graph are then passed to a shared multilayer perceptron layer. To retain the information from the low-dimensional layers, the output of each layer is used as input to all subsequent layers, expressed as:

Here the quantities related are the output feature of point x_i at layer l and its output feature at layer l-1; h_θ(·) denotes the shared multilayer perceptron layer, and the combining operation is concatenation. Finally, max pooling is used to obtain aggregated local features that are permutation-invariant within each local graph.

Beyond the connections inside a subunit, the output of each subunit is also passed through skip connections to every subsequent subunit as input, which can be written as:

where F_i denotes the output feature of the i-th subunit, E_i(·) denotes the i-th subunit, F_in denotes the input feature, and the combining operation is concatenation.
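The skip-connection pattern between subunits can be illustrated with a small sketch for a single feature vector. It assumes that each subunit receives the concatenation of the input feature F_in and the outputs of all earlier subunits; `dense_forward` and `toy_unit` are hypothetical names, and `toy_unit` is only a stand-in for a real subunit E_i.

```python
def dense_forward(f_in, subunits):
    """Run subunits so that each one receives the concatenation of the
    original input and the outputs of all earlier subunits."""
    collected = [list(f_in)]          # F_in plus every earlier output F_i
    outputs = []
    for unit in subunits:
        # Concatenate everything produced so far along the feature axis.
        unit_input = [v for feat in collected for v in feat]
        f_i = unit(unit_input)
        outputs.append(f_i)
        collected.append(f_i)
    return outputs

# Hypothetical stand-in for a subunit E_i: returns [sum, length] of its input.
def toy_unit(x):
    return [sum(x), float(len(x))]

outs = dense_forward([1.0, 2.0], [toy_unit, toy_unit, toy_unit])
```

The second element of each toy output is the length of that subunit's input, which makes the growth of the concatenated input from one subunit to the next easy to inspect.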

Further, the feature aggregation in step S2 combines attention pooling and max pooling to extract global features, which are aggregated with the point-wise local features F_p to generate F_a.

Further, the feature aggregation takes the aggregate of the point-wise features F_p and the global features as the input to the next stage, so that fine-grained shapes are generated while the original features of the input point cloud are retained.

Further, the attention pooling computes an attention score a_i for each element of the input features:

where FC(·) denotes a fully connected layer and its argument is the input feature of the i-th point.

Each element is then multiplied by its score, and the products are summed to give the aggregated feature f_out, expressed as:

where f_out denotes the output feature and h_θ(·) denotes the shared multilayer perceptron layer.
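A minimal sketch of the two pooling channels follows. Several choices are assumptions made for illustration: the fully connected layer is reduced to a single linear map producing one logit per point, the scores are normalized with softmax, the shared multilayer perceptron h_θ is omitted, and the two pooled vectors are combined by concatenation. The names `attention_pool` and `max_pool` are hypothetical.

```python
import math

def attention_pool(features, weights, bias=0.0):
    """Score each point feature with a linear layer, normalize the scores
    with softmax, and return the score-weighted sum of the features."""
    logits = [sum(w * v for w, v in zip(weights, f)) + bias for f in features]
    peak = max(logits)                       # subtract max for stability
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    scores = [e / total for e in exps]       # attention scores a_i
    dim = len(features[0])
    pooled = [sum(a * f[d] for a, f in zip(scores, features)) for d in range(dim)]
    return scores, pooled

def max_pool(features):
    """Channel-wise maximum over all points."""
    return [max(col) for col in zip(*features)]

# Three points with 2-dimensional features; zero weights give uniform scores.
feats = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
scores, attn = attention_pool(feats, weights=[0.0, 0.0])
global_feat = attn + max_pool(feats)   # concatenate the two channels
```

Max pooling keeps only the largest response per channel, whereas the weighted sum lets every point contribute in proportion to its score, so the two channels are complementary.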

Further, the feature completion and reconstruction in step S3 uses a feature completion module to expand and refine the features, from which the coordinates of the complete point cloud are reconstructed. See FIG. 3, which is a schematic diagram of the feature completion and coordinate reconstruction of the point cloud completion method provided by the invention.

Further, the feature completion module has a hierarchical residual structure.

Further, the feature completion and reconstruction in step S3 includes:

S101: first, obtaining a feature matrix L_0 of size N×C through a shared multilayer perceptron, where C is the feature dimension and N is the number of points;

S102: replicating the transformed feature matrix r times to obtain a new feature map;

S103: generating r different two-dimensional grids containing m grid points, expanding them to the size of the replicated features, and concatenating the grid point coordinates with the replicated features;

S104: obtaining an expanded feature matrix H_0 of size rN3×C through a self-attention unit and a shared multilayer perceptron;

S105: reshaping H_0 into a feature matrix L_1 with the same dimensions as L_0, and taking the difference between L_1 and L_0 to obtain the residual ΔL;

S106: passing ΔL through steps S102 to S104 to obtain the expanded residual feature H_1, and adding H_1 to H_0 to obtain the expanded feature H_out;

S107: finally, obtaining the complete point cloud of size rN3×3 through a shared multilayer perceptron with parameters [C, 128, 64, 3].
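The replication and grid-concatenation steps (S102 and S103) can be sketched as follows. This is an illustration under assumptions, not the patent's procedure: it attaches one distinct 2D grid coordinate to each of the r copies, draws those coordinates from a square grid over [-1, 1], and leaves out the self-attention unit, the multilayer perceptrons and the residual branch. The name `expand_features` is hypothetical.

```python
def expand_features(feature_matrix, r):
    """Tile an N x C feature matrix r times and append a distinct 2D grid
    code to each copy, giving an (r * N) x (C + 2) matrix."""
    side = 1
    while side * side < r:       # smallest square grid holding r codes
        side += 1

    # Grid coordinates spread over [-1, 1] (a single cell maps to 0.0).
    def coord(idx):
        return 0.0 if side == 1 else -1.0 + 2.0 * idx / (side - 1)

    codes = [(coord(g // side), coord(g % side)) for g in range(r)]
    expanded = []
    for code in codes:                       # one copy per grid code
        for row in feature_matrix:
            expanded.append(list(row) + list(code))
    return expanded

l0 = [[0.1, 0.2, 0.3], [0.4, 0.5, 0.6]]      # N = 2, C = 3
h_in = expand_features(l0, r=4)
```

The grid code is what distinguishes otherwise identical copies of a feature row, so that later layers can map each copy to a different output point.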

本申请在特征空间中定义每个点的邻域,即根据特征图的相似性来寻找邻近点,并且在每一层后动态更新邻域图,来获取结构与内容感知的上下文信息。The present application defines the neighborhood of each point in the feature space, that is, finds adjacent points according to the similarity of the feature maps, and dynamically updates the neighborhood map after each layer to obtain the context information of structure and content awareness.

Alongside max pooling, the present application uses attention pooling to attend to specific salient structural information, and proposes a multi-channel scheme that combines the max pooling and attention pooling operations to extract richer global geometric features.
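One way to combine the two pooling operations is sketched below. It assumes that "multi-channel" means concatenating the two pooled vectors and that attention scores come from a single learned linear map; neither detail is stated in this passage, and the names are hypothetical.

```python
import numpy as np

def max_pool(feat):
    """Channel-wise maximum over all points: (N, C) -> (C,)."""
    return feat.max(axis=0)

def attention_pool(feat, score_w):
    """Softmax-weighted sum over points; score_w (C, C) yields per-point, per-channel scores."""
    scores = feat @ score_w                        # (N, C)
    scores -= scores.max(axis=0, keepdims=True)    # stabilise the softmax
    attn = np.exp(scores)
    attn /= attn.sum(axis=0, keepdims=True)        # each channel's weights sum to 1 over points
    return (attn * feat).sum(axis=0)               # (C,)

def global_feature(feat, score_w):
    """Assumed fusion: concatenate the max-pooled and attention-pooled vectors."""
    return np.concatenate([max_pool(feat), attention_pool(feat, score_w)])   # (2C,)

rng = np.random.default_rng(0)
feat = rng.standard_normal((1024, 32))             # N = 1024 points, C = 32 channels
score_w = rng.standard_normal((32, 32)) / np.sqrt(32)
g = global_feature(feat, score_w)
print(g.shape)   # (64,)
```

Max pooling keeps only the strongest response per channel, while the attention branch is a weighted average that can still reflect several salient points; the concatenated vector carries both views.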

The present application proposes a lightweight point cloud completion network combining graph convolution and attention mechanisms, which efficiently completes partially missing point clouds while preserving and restoring as much of the input point cloud's detail and geometric structure as possible.

The present application proposes a one-stage network model that outputs the complete point cloud directly rather than using a multi-step completion strategy. Unlike previous methods, it does not generate the complete point cloud by directly decoding the feature embedding compressed by pooling. Instead, it fuses point-wise features with global geometric features, completes the point cloud in feature space, and expands and refines the features to reconstruct the complete point cloud.

Embodiment 2

Based on Embodiment 1 above, this embodiment mainly describes the network training setup used to validate the point cloud completion method based on dynamic graph convolution and attention mechanism.

First, the network hyperparameters are set. The model is implemented in PyTorch. All network models are trained with the Adam optimizer with $\beta_1 = 0.9$ and $\beta_2 = 0.999$. The initial learning rate is 5e-4 for the generator and 1e-5 for the discriminator, decayed by a factor of 0.7 every 40 epochs. The training batch size is 32, and the network converges after roughly 150 epochs.
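The learning-rate schedule can be written out in a few lines. The sketch below assumes that "decayed by 0.7 every 40 epochs" means the rate is multiplied by 0.7 at each 40-epoch boundary; the function and constant names are illustrative.

```python
def step_decay_lr(initial_lr, epoch, step_size=40, gamma=0.7):
    """Learning rate in effect after `epoch` completed epochs under step decay."""
    return initial_lr * gamma ** (epoch // step_size)

ADAM_BETAS = (0.9, 0.999)   # beta1, beta2 as stated in the text
BATCH_SIZE = 32

for epoch in (0, 39, 40, 80, 149):
    gen_lr = step_decay_lr(5e-4, epoch)    # generator
    disc_lr = step_decay_lr(1e-5, epoch)   # discriminator
    print(epoch, gen_lr, disc_lr)
```

In PyTorch the same behavior is typically obtained with `torch.optim.Adam(params, lr=5e-4, betas=(0.9, 0.999))` together with `torch.optim.lr_scheduler.StepLR(optimizer, step_size=40, gamma=0.7)`, stepping the scheduler once per epoch.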

Next, the loss function is defined. The overall loss has two parts: the completion loss ($L_c$) pulls the output point cloud toward the ground-truth point cloud, and the uniform loss ($L_{uni}$) constrains the network to output an evenly distributed point cloud. Specifically, Chamfer Distance (Formula 8) is chosen as the completion loss and is computed between the complete point cloud $Q$ output by the network and the corresponding ground-truth point cloud $Q_{gt}$.
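Formula 8 is not reproduced in this text, and several variants of Chamfer Distance are in use (squared or unsquared distances, summed or averaged terms). The sketch below shows one common variant, the symmetric sum of mean squared nearest-neighbor distances; treat that choice as an assumption rather than the patent's exact definition.

```python
import numpy as np

def chamfer_distance(q, q_gt):
    """Symmetric Chamfer Distance with squared Euclidean distances, averaged per cloud."""
    diff = q[:, None, :] - q_gt[None, :, :]     # (|Q|, |Q_gt|, 3) pairwise differences
    d2 = np.sum(diff ** 2, axis=2)              # squared pairwise distances
    # nearest ground-truth point for every prediction, and vice versa
    return d2.min(axis=1).mean() + d2.min(axis=0).mean()

rng = np.random.default_rng(0)
q_gt = rng.uniform(-1.0, 1.0, size=(2048, 3))
q = q_gt + 0.01 * rng.standard_normal(q_gt.shape)   # a slightly perturbed prediction
print(chamfer_distance(q, q_gt))
```

The pairwise matrix costs O(|Q|·|Q_gt|) memory, which is acceptable for 2048 points but usually replaced by a dedicated GPU kernel at 16384 points.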

To output an evenly distributed point cloud, a uniform loss (Formula 9) is introduced. Here $U_{imbalance}$ constrains the number of points in a local neighborhood, and $U_{clutter}$ constrains the geometric distribution of the points in that neighborhood. $S_j$ ($j = 1, \dots, M$) denotes a point subset, each obtained by a ball query of radius $r_d$. The loss also uses the expected number of points in $S_j$ and the expected distance between a point and its neighbors; the latter is derived under the assumption that $S_j$ is planar and that neighboring points are arranged hexagonally.
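The formula for the uniform loss is not reproduced in this text. The description (an imbalance term, a clutter term, ball-query subsets, a hexagonal-arrangement assumption) matches the uniform loss introduced for point cloud upsampling in PU-GAN. Assuming that this is the form Formula 9 denotes, it can be written as below, where $\hat{n}$ (expected number of points in $S_j$), $\hat{d}$ (expected point-to-neighbor distance) and $d_{j,k}$ (distance from the k-th point of $S_j$ to its nearest neighbor) are symbols taken from that formulation rather than from this document.

```latex
U_{imbalance}(S_j) = \frac{\left(|S_j| - \hat{n}\right)^2}{\hat{n}},
\qquad
U_{clutter}(S_j) = \sum_{k=1}^{|S_j|} \frac{\left(d_{j,k} - \hat{d}\right)^2}{\hat{d}},
\qquad
\hat{d} = \sqrt{\frac{2\pi r_d^2}{|S_j|\sqrt{3}}}

L_{uni} = \sum_{j=1}^{M} U_{imbalance}(S_j) \cdot U_{clutter}(S_j)
```

Under this reading, $U_{imbalance}$ penalizes subsets that hold too many or too few points, and $U_{clutter}$ penalizes uneven spacing inside a subset.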

The overall loss function is the weighted sum of the two loss functions above, where β and γ are the weights of the corresponding losses:

$$L = \beta L_c + \gamma L_{uni}. \qquad (8)$$

Finally, the training dataset is set up. Experiments were run on the MVP dataset (Multi-View Partial Point Cloud Dataset), which comes from the work of Pan et al. (2021) and provides high-quality multi-view partial point clouds. It covers 16 object categories, with 62,400 samples in the training set and 41,600 samples in the test set. The MVP dataset provides 2048-point input point clouds and complete point clouds at several resolutions (2048, 4096, 8192 and 16384 points) for evaluating completion quality at different resolutions.

Embodiment 3

Based on Embodiments 1-2 above, this embodiment mainly describes the testing of the trained network model during validation of the point cloud completion method based on dynamic graph convolution and attention mechanism.

Evaluation metric: completion accuracy is evaluated by the Chamfer Distance (CD) between the predicted point cloud $Q$ and the ground-truth point cloud $Q_{gt}$; a smaller CD value indicates better completion.

Test results: the completion quality of our model was tested on the test set of the MVP dataset and compared with the following methods in the same environment:

1. PCN (Yuan et al., 2018) generates the complete point cloud in a coarse-to-fine manner. It uses two stacked shared multilayer perceptron layers as the encoder to extract global features, and combines a fully connected decoder with a folding-based decoder to generate a dense complete point cloud;

2. MSN (Liu et al., 2019) also completes the task with a two-stage model. The first stage generates a collection of surface patches of the object; the second stage merges the input point cloud with the coarse prediction, applies the proposed minimum density sampling to obtain an evenly distributed point cloud, and refines it through a residual network to obtain the final complete point cloud;

3. CRN (Wang et al., 2020) uses a cascaded refinement strategy to refine point positions locally and globally in a coarse-to-fine manner, and designs a patch discriminator that uses adversarial training to further ensure that every local region is realistic;

4. ECG (Pan, 2020) uses graph convolution in the refinement stage to propagate multi-scale edge features and thereby preserve local geometric detail;

5. VRCNet (Pan et al., 2021) proposes a variational relational network with two parallel paths in the feature extraction stage, which take the partial point cloud and the corresponding complete point cloud as input respectively. Constraints make the features extracted from the partial point cloud as similar as possible to those of the complete point cloud, yielding richer feature information.

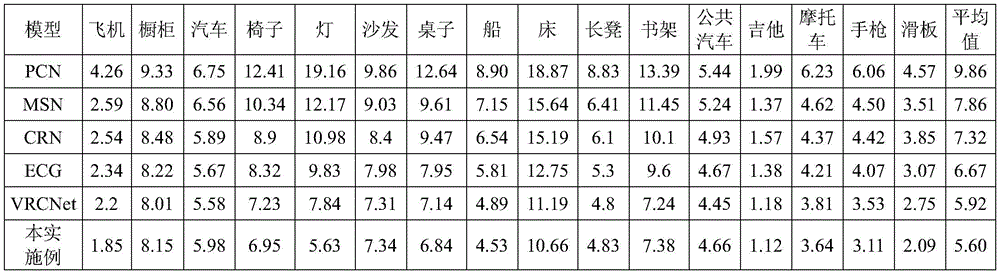

The quantitative and qualitative results on the MVP dataset are shown in Table 1 and FIG. 4. FIG. 4 compares the results of the point cloud completion method based on dynamic graph convolution and attention mechanism provided by the present invention with those of the other methods. It shows directly that the completion result of our method (labeled "Ours") is the best and the closest to the original point cloud structure.

Table 1. Point cloud completion results on the MVP test set, reported as the CD value ($\times 10^4$) obtained by each model on each object category

The comparison shows that our method achieves the smallest CD value. Its advantage is that it restores the original structure of the input point cloud as far as possible, and it uses the geometric features of the known point cloud to learn similar structural information for completing the missing part, which yields more realistic completion results.

The above are only preferred embodiments of the present invention and do not limit its scope of protection. Those skilled in the art may make various modifications and changes to the present invention. Any change, modification, substitution, integration or parameter change made to these embodiments within the spirit and principles of the present invention, whether through conventional substitution or by achieving the same function, falls within the scope of protection of the present invention.

Claims (10)

Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202210315804.5A CN114693873B (en) | 2022-03-29 | 2022-03-29 | A point cloud completion method based on dynamic graph convolution and attention mechanism |

Publications (2)

| Publication Number | Publication Date |
|---|---|
| CN114693873A (en) | 2022-07-01 |
| CN114693873B (en) | 2025-07-04 |

Family

ID=82140102

Family Applications (1)

| Application Number | Title | Priority Date | Filing Date |
|---|---|---|---|
| CN202210315804.5A Active CN114693873B (en) | A point cloud completion method based on dynamic graph convolution and attention mechanism | 2022-03-29 | 2022-03-29 |

Country Status (1)

| Country | Link |
|---|---|
| CN (1) | CN114693873B (en) |

Cited By (5)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| US11488283B1 (en) * | 2021-11-30 | 2022-11-01 | Huazhong University Of Science And Technology | Point cloud reconstruction method and apparatus based on pyramid transformer, device, and medium |
| CN115512201A (en) * | 2022-09-26 | 2022-12-23 | 重庆大学 | Point cloud feature extraction method, system and framework based on residual natural attention |
| CN116188882A (en) * | 2023-03-06 | 2023-05-30 | 云南大学 | Point cloud upsampling method and system integrating self-attention and multi-path graph convolution |
| CN116188924A (en) * | 2022-12-20 | 2023-05-30 | 辽宁工程技术大学 | A point cloud completion method based on multi-scale feature extraction |
| CN116310179A (en) * | 2023-03-24 | 2023-06-23 | 斯乾(上海)科技有限公司 | Point cloud completion method, device, equipment and medium |

Citations (6)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN113256543A (en) * | 2021-04-16 | 2021-08-13 | 南昌大学 | Point cloud completion method based on graph convolution neural network model |
| CN113408629A (en) * | 2021-06-22 | 2021-09-17 | 中国科学技术大学 | Multiple completion method of motor vehicle exhaust telemetering data based on time-space convolution network |
| LU500265B1 (en) * | 2020-05-19 | 2021-11-19 | Univ South China Tech | A Method of Upsampling of Point Cloud Based on Deep Learning |
| US11222217B1 (en) * | 2020-08-14 | 2022-01-11 | Tsinghua University | Detection method using fusion network based on attention mechanism, and terminal device |
| CN114004871A (en) * | 2022-01-04 | 2022-02-01 | 山东大学 | A point cloud registration method and system based on point cloud completion |
| CN114066772A (en) * | 2021-11-26 | 2022-02-18 | 南京理工大学 | A method and system for tooth point cloud completion based on transformer encoder |

Non-Patent Citations (4)

| Title |
|---|
| LIANG PAN: "ECG: Edge-aware Point Cloud Completion with Graph Convolution", IEEE ROBOTICS AND AUTOMATION LETTERS, vol. 5, no. 3, 31 July 2020 (2020-07-31), pages 3 * |
| WENXIAO ZHANG ET AL: "Detail Preserved Point Cloud Completion via Separated Feature Aggregation", 2020 EUROPEAN CONFERENCE ON COMPUTER VISION (ECCV), 5 July 2020 (2020-07-05), pages 3 * |
| WENXIAO ZHANG ET AL: "PCAN: 3D Attention Map Learning Using Contextual Information for Point Cloud Based Retrieval", IEEE/CVF CONFERENCE ON COMPUTER VISION AND PATTERN RECOGNITION (CVPR), 15 June 2019 (2019-06-15), pages 1 - 3 * |
| YU BIN; DONG CHEN; LIU YANHUA; CHENG YE: "A survey of point cloud segmentation methods based on deep learning", Computer Engineering and Applications (计算机工程与应用), vol. 56, no. 01, 11 December 2019 (2019-12-11) * |

Also Published As

| Publication number | Publication date |
|---|---|
| CN114693873B (en) | 2025-07-04 |

Similar Documents

| Publication | Publication Date | Title |
|---|---|---|
| CN114693873A (en) | | A point cloud completion method based on dynamic graph convolution and attention mechanism |
| Yifan et al. | | Patch-based progressive 3d point set upsampling |
| Mao et al. | | PU-Flow: A point cloud upsampling network with normalizing flows |
| EP4481587A1 (en) | | Structure data generation method and apparatus, device, medium, and program product |
| CN112818849B (en) | | Crowd density detection algorithm based on context attention convolutional neural network for countermeasure learning |
| CN110853039B (en) | | Sketch image segmentation method, system and device for multi-data fusion and storage medium |
| CN110544297A (en) | | Three-dimensional model reconstruction method for single image |
| CN110084773A (en) | | A kind of image interfusion method based on depth convolution autoencoder network |
| Zhu et al. | | Csrgan: medical image super-resolution using a generative adversarial network |
| EP4325388A1 (en) | | Machine-learning for topologically-aware cad retrieval |
| CN113240683A (en) | | Attention mechanism-based lightweight semantic segmentation model construction method |
| CN117315241A (en) | | A semantic segmentation method for scene images based on transformer structure |
| CN117173329A (en) | | A point cloud upsampling method based on reversible neural network |
| Leng et al. | | A point contextual transformer network for point cloud completion |
| CN104036482B (en) | | Facial image super-resolution method based on dictionary asymptotic updating |
| CN117576312A (en) | | Hand model construction method and device and computer equipment |
| Zhang et al. | | Efficient pooling operator for 3D morphable models |
| Huang et al. | | 3D human pose estimation with multi-scale graph convolution and hierarchical body pooling |
| CN114373059A (en) | | Intelligent garment three-dimensional modeling method based on sketch |
| Wu et al. | | 3d shape completion on unseen categories: A weakly-supervised approach |
| Quan et al. | | Deep learning for 3d point cloud enhancement: A survey |
| Mao et al. | | Dmf-net: Image-guided point cloud completion with dual-channel modality fusion and shape-aware upsampling transformer |
| CN117078518A (en) | | Three-dimensional point cloud superdivision method based on multi-mode iterative fusion |
| CN114998104B (en) | | A super-resolution image reconstruction method and system based on hierarchical learning and feature separation |
| CN116342387A (en) | | Point cloud super-resolution method, system and electronic device for multi-stage deep learning |

Legal Events

| Date | Code | Title | Description |
|---|---|---|---|
| | PB01 | Publication | |
| | SE01 | Entry into force of request for substantive examination | |
| | GR01 | Patent grant | |