Top Software Engineering Best Practices for 2025

In the world of software development, the gap between functional code and exceptional, maintainable products is defined by process and discipline. While new tools and frameworks emerge daily, the teams that consistently deliver high-quality software rely on a solid foundation of proven principles. Chasing the latest trend is easy; building robust, scalable, and resilient systems requires a deliberate commitment to excellence. This guide is your definitive resource for the most critical software engineering best practices that elite teams use to build better products, faster.

We’ve compiled a complete collection of the top ten practices, moving beyond generic advice to provide actionable insights and practical examples. You will learn how to implement effective Git workflows, master Test-Driven Development (TDD), and integrate security from day one. We cover everything from setting up a CI/CD pipeline that automates delivery to writing clean, refactorable code that future-proofs your work.

The guide is written for modern development teams and closes with a focus on integrating AI-assisted workflows. That section includes a sample prompt and checklist you can use with tools like VS Code or Cursor to standardize effective development patterns. For instance, a single, well-structured prompt can generate a complete deployment checklist that surfaces details that are easy to miss. This approach helps developers, managers, and entire organizations standardize on practices that reduce technical debt, improve collaboration, and create a sustainable engineering culture. These aren’t just recommendations; they are a blueprint for building software that stands the test of time.

1. Version Control and Git Workflow

Version control is the bedrock of modern software engineering best practices, acting as a complete historical record and collaboration hub for your codebase. With a system like Git, teams can track every change, experiment with new features on isolated lines of development called branches, and merge contributions from multiple developers without overwriting each other’s work. It’s the safety net that lets you refactor code with confidence or revert to a stable state if something goes wrong.

This practice isn’t just for small projects; it scales to massive endeavors. Microsoft famously migrated the entire Windows codebase to Git, enabling thousands of engineers to collaborate efficiently. Similarly, the open-source React library relies on a streamlined GitHub Flow to manage contributions from a global community.

How to Implement a Git Workflow

A structured Git workflow prevents chaos and standardizes how changes move from development to production. While several models exist (like GitFlow or Trunk-Based Development), a feature-branch workflow is a great starting point for most teams.

- Create a New Branch: For every new task, bug fix, or feature, create a descriptive branch (e.g., `feature/user-authentication` or `fix/login-bug`).

- Commit Often: Make small, atomic commits with clear, conventional messages (e.g., `feat: add password reset API endpoint`). This makes your change history easy to understand.

- Open a Pull Request (PR): When your work is complete, open a PR to merge your feature branch into the main branch (`main` or `develop`).

- Review and Merge: The PR triggers automated checks (tests, linting) and allows teammates to review your code, suggest improvements, and approve the changes before they are integrated.
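To make the branch and commit conventions enforceable, a team might wire a small check into a `commit-msg` hook or a CI job. The following is a minimal, hypothetical Python validator. The allowed commit types and the `feature/`, `fix/`, `chore/`, and `docs/` prefixes are assumptions modeled on the examples above and on the Conventional Commits format, so adjust them to your team's rules.

```python
import re

# Assumed commit types, modeled on Conventional Commits; adjust per team.
COMMIT_TYPES = ("feat", "fix", "docs", "refactor", "test", "chore", "ci")

# Header shape: type, optional (scope), optional "!" for breaking changes, ": ", summary.
COMMIT_HEADER = re.compile(
    r"^(?P<type>" + "|".join(COMMIT_TYPES) + r")"
    r"(?:\((?P<scope>[a-z0-9-]+)\))?"
    r"(?P<breaking>!)?: (?P<summary>\S.*)$"
)

# Hypothetical branch policy: a known prefix, then a lowercase, hyphenated slug.
BRANCH_NAME = re.compile(r"^(feature|fix|chore|docs)/[a-z0-9]+(?:-[a-z0-9]+)*$")


def valid_commit_header(message: str) -> bool:
    """Validate only the first line (the header) of a commit message."""
    header = message.splitlines()[0] if message else ""
    return COMMIT_HEADER.match(header) is not None


def valid_branch_name(name: str) -> bool:
    """Check a branch name against the prefix/slug policy above."""
    return BRANCH_NAME.match(name) is not None


if __name__ == "__main__":
    print(valid_commit_header("feat: add password reset API endpoint"))  # True
    print(valid_commit_header("Added stuff"))                             # False
    print(valid_branch_name("feature/user-authentication"))               # True
    print(valid_branch_name("my_branch"))                                 # False
```

Regular expressions keep the check dependency-free. Teams that want richer rules often adopt a dedicated commit linter instead.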

Adopting this structured approach ensures that your main branch always remains stable and deployable, making it a fundamental software engineering best practice.

2. Test-Driven Development (TDD)

Test-Driven Development (TDD) flips the traditional development process on its head. Instead of writing code first and tests later (if at all), TDD requires you to write a failing test before you write any production code. This “test-first” approach, guided by the simple “Red-Green-Refactor” cycle, helps ensure that your code is not only correct but also thoughtfully designed and testable from the very beginning. It’s a discipline that forces clarity and precision.

This practice is trusted for building robust and mission-critical systems. NASA’s Jet Propulsion Laboratory uses TDD for flight software where errors can have catastrophic consequences. Similarly, companies like Spotify apply TDD principles to ensure the reliability of their backend microservices, and Thoughtworks champions it as a core practice for building high-quality software for their clients.

How to Implement Test-Driven Development

Adopting TDD involves a rhythmic, disciplined cycle that builds momentum and confidence. It shifts the focus from “does it work?” to “how can I prove it works?” before a single line of implementation is written.

- Write a Failing Test (Red): Identify the smallest piece of required functionality. Write an automated test that defines that behavior and watch it fail. This failure is important; it proves the test works and that the feature doesn’t exist yet.

- Write Minimal Code (Green): Write the simplest, most straightforward code possible to make the test pass. The goal here is not elegance or optimization but simply to get a passing result.

- Refactor the Code: With the safety of a passing test, you can now clean up your code. Improve its structure, remove duplication, and enhance readability without changing its external behavior. The tests ensure you don’t break anything.
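Here is one trip around the loop using Python's built-in `unittest`. The `slugify` function and its expected behavior are hypothetical, chosen only to show the rhythm. The comments record what each Red and Green step looked like before the final, refactored implementation.

```python
import re
import unittest


# Cycle 1 (Red): the first test was written before slugify existed, so it failed.
# (Green): `return text.lower().replace(" ", "-")` was the minimal code to pass it.
# Cycle 2 (Red): the punctuation test failed against that minimal version.
# (Green + Refactor): the implementation below passes both tests and states
# the rule ("keep runs of letters and digits") more directly.
class TestSlugify(unittest.TestCase):
    def test_lowercases_and_joins_words_with_hyphens(self):
        self.assertEqual(slugify("Hello World"), "hello-world")

    def test_strips_punctuation(self):
        self.assertEqual(slugify("Ship it, now!"), "ship-it-now")


def slugify(text: str) -> str:
    """Turn arbitrary text into a lowercase, hyphen-separated URL slug."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return "-".join(words)
```

Saved as a file such as `test_slugify.py` (a hypothetical name), the suite runs with `python -m unittest test_slugify`. Each new behavior starts the cycle again with one more failing test.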

By following this loop, you build a comprehensive suite of tests that act as living documentation and a safety net for future changes, making it an essential software engineering best practice.

3. Code Review and Pair Programming

No code should be a solo performance. Code review and pair programming are two collaborative software engineering best practices designed to improve code quality, share knowledge, and catch defects early. Code review involves peers systematically examining source code before it’s merged, while pair programming is a real-time collaboration where two developers work on the same task at one workstation. Both practices foster a culture of collective ownership and continuous learning.

These practices are battle-tested in the industry. Google famously requires peer review for nearly every change, a policy that reinforces high engineering standards. Similarly, companies like Pivotal Labs and Thoughtworks have built their reputations on Extreme Programming (XP) practices, with pair programming at their core. The underlying principle is simple: more eyes on the code lead to a more robust and maintainable final product.

How to Implement Code Reviews and Pairing

Integrating these collaborative practices requires clear guidelines to be effective. The goal is to make feedback constructive and the process efficient, not to create bottlenecks. For a modern team, this means combining asynchronous reviews with strategic real-time pairing.

- Keep Pull Requests Small: Aim for PRs under 400 lines of code. Small, focused changes are easier and faster to review, leading to higher-quality feedback.

- Automate the Obvious: Use linters and automated formatters (like Prettier or Black) to handle stylistic debates. The human review process should focus on logic, architecture, and potential bugs.

- Provide Constructive, Impersonal Feedback: Frame comments as suggestions or questions about the code, not criticisms of the author. Explain the “why” behind your suggestions. For security-focused feedback, follow a structured process. You can learn more about how to run effective secure code reviews.

- Use Pair Programming Strategically: Pair on complex problems, onboarding a new team member, or critical bug fixes. Rotate the “driver” (who writes code) and “navigator” (who strategizes) roles frequently to keep both developers engaged.
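The "small PR" guideline is easy to check automatically. This hypothetical Python helper parses the output of `git diff --numstat`, which lists added and deleted line counts per file, tab-separated, with `-` for binary files. It then flags a change set that exceeds 400 lines. Both the threshold and the `origin/main...HEAD` comparison range are assumptions to adapt.

```python
import subprocess

MAX_CHANGED_LINES = 400  # guideline from the text; tune per team


def changed_lines(numstat: str) -> int:
    """Sum added + deleted lines from `git diff --numstat` output.

    Binary files report '-' instead of counts and are skipped.
    """
    total = 0
    for line in numstat.splitlines():
        parts = line.split("\t")
        if len(parts) < 3 or parts[0] == "-":
            continue
        total += int(parts[0]) + int(parts[1])
    return total


def check_pr_size(base: str = "origin/main") -> bool:
    """Return False (and explain why) if HEAD changes too many lines vs. base."""
    # Three-dot range: changes on HEAD since it diverged from base.
    out = subprocess.run(
        ["git", "diff", "--numstat", f"{base}...HEAD"],
        capture_output=True, text=True, check=True,
    ).stdout
    total = changed_lines(out)
    if total > MAX_CHANGED_LINES:
        print(f"PR changes {total} lines; consider splitting it (limit {MAX_CHANGED_LINES}).")
        return False
    return True
```

Run as a non-blocking CI step, a check like this nudges authors toward smaller PRs without rejecting the occasional large, mechanical change such as a dependency bump.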

4. Continuous Integration and Continuous Deployment (CI/CD)

Continuous Integration and Continuous Deployment (CI/CD) automates the software release process, enabling teams to deliver code changes more frequently and reliably. This practice is the engine of modern DevOps, bridging the gap between development and operations. Continuous Integration (CI) automatically builds and tests code every time a change is committed, while Continuous Deployment (CD) extends this by automatically deploying every validated change to production.

This automation is a cornerstone of high-performing software engineering best practices. Amazon famously deploys new code every 11.7 seconds using CI/CD pipelines, and Netflix deploys thousands of times per day. These systems provide rapid feedback, reduce manual errors, and accelerate the delivery of valuable features and fixes to users, making them essential for teams that need to innovate quickly and maintain stability.

How to Implement a CI/CD Pipeline

A CI/CD pipeline automates the steps required to get your code from version control into the hands of users. Starting with CI is a practical first step before moving to full CD.

- Start with Continuous Integration: Set up a build server (like Jenkins, GitHub Actions, or GitLab CI) to automatically build and run unit tests on every commit to your main or feature branches.

- Keep Builds and Tests Fast: Optimize your pipeline to provide feedback in under 10 minutes. A slow pipeline becomes a bottleneck that developers start to ignore.

- Automate Deployment: Once CI is stable, extend the pipeline to automatically deploy validated builds to a staging environment and then to production.

- Use Feature Flags: Decouple deployment from release. Use feature flags to safely push code to production while keeping new features hidden until they are ready, reducing the risk of big-bang releases.
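At its simplest, a feature flag is a lookup consulted at runtime. The sketch below is a hypothetical in-memory flag store with a percentage rollout. Each user is hashed into a stable bucket from 0 to 99, so the same user keeps seeing the same variant as the rollout widens. Real teams usually back this with a flag service or config store, and every name here is illustrative only.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class Flag:
    enabled: bool = False       # global kill switch
    rollout_percent: int = 0    # 0-100: share of users who see the feature


# Hypothetical flag config; in practice this would come from a config store.
FLAGS = {
    "new-checkout": Flag(enabled=True, rollout_percent=10),
    "dark-mode": Flag(enabled=False, rollout_percent=100),
}


def bucket(flag_name: str, user_id: str) -> int:
    """Map (flag, user) to a stable bucket in 0-99 via a hash."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode()).hexdigest()
    return int(digest, 16) % 100


def is_enabled(flag_name: str, user_id: str) -> bool:
    flag = FLAGS.get(flag_name)
    if flag is None or not flag.enabled:
        return False  # unknown or switched-off flags default to "off"
    return bucket(flag_name, user_id) < flag.rollout_percent


# The new code path is deployed to production, but only flagged users reach it.
def checkout(user_id: str) -> str:
    if is_enabled("new-checkout", user_id):
        return "new checkout flow"
    return "legacy checkout flow"
```

Raising `rollout_percent` from 10 to 100 releases the feature without a new deployment. Setting `enabled=False` acts as an instant rollback.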

This infographic illustrates a simplified CI/CD process flow from code commit to production deployment.

The visualization shows how each developer’s commit triggers a series of automated quality gates, ensuring that only validated code reaches end-users.

5. Agile and Iterative Development

Agile is a flexible, iterative approach to software development that prioritizes collaboration, customer feedback, and adaptive planning. Instead of following rigid, sequential phases, Agile methodologies break down large projects into small, manageable iterations called sprints. This focus on incremental delivery allows teams to ship working software frequently, gather real-world feedback, and adapt to changing requirements with speed and precision.

This practice has been a game-changer for companies of all sizes. Spotify famously developed its own scaling model with “Squads” and “Tribes” to maintain agility, while ING Bank transformed its entire 3,500-person organization to an Agile model. Even the FBI’s Sentinel project, a massive government initiative, was successfully recovered from failure after the team switched to an Agile methodology. These examples illustrate why Agile is a cornerstone of modern software engineering best practices.

How to Implement an Agile Framework

Adopting an Agile framework like Scrum provides the structure needed to deliver value continuously. It introduces clear roles, ceremonies, and artifacts that keep the team aligned and focused.

- Define Roles: Establish a Product Owner (defines what to build), a Scrum Master (facilitates the process), and the Development Team (builds the product).

- Plan Your Sprint: Work in fixed-length iterations (usually 2-4 weeks). Begin each with a planning session to select a realistic amount of work from the backlog. For a deeper dive into this crucial step, explore our guide on effective Agile development sprint planning.

- Hold Daily Stand-ups: Conduct short, daily meetings where each team member shares progress, plans for the day, and identifies any blockers.

- Review and Retrospect: At the end of each sprint, hold a Sprint Review to demonstrate the completed work to stakeholders and a Sprint Retrospective for the team to reflect on what went well and what can be improved.
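Selecting "a realistic amount of work" is often anchored on recent velocity. This hypothetical helper averages the story points completed in the last few sprints. It then fills the sprint from the top of a priority-ordered backlog until that capacity is reached. Story points, the three-sprint window, and the rule of skipping items that do not fit are all assumptions. Scrum itself does not prescribe a formula.

```python
from dataclasses import dataclass


@dataclass
class BacklogItem:
    title: str
    points: int


def average_velocity(completed_points: list[int], window: int = 3) -> float:
    """Mean points completed over the most recent `window` sprints."""
    recent = completed_points[-window:]
    return sum(recent) / len(recent) if recent else 0.0


def plan_sprint(backlog: list[BacklogItem], capacity: float) -> list[BacklogItem]:
    """Walk the backlog in priority order, taking items that still fit."""
    selected, used = [], 0
    for item in backlog:
        if used + item.points <= capacity:
            selected.append(item)
            used += item.points
    return selected
```

Skipping an item that does not fit lets smaller items further down fill the remaining capacity. Some teams prefer to stop at the first misfit so priority order is respected strictly. Either way, the result is a starting proposal for the planning conversation, not a replacement for it.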

Embracing this cycle of planning, executing, and reflecting ensures your team consistently improves and delivers software that truly meets user needs.

6. Clean Code and Refactoring

Writing code that merely works is only the first step; making it understandable, maintainable, and adaptable is what separates professional software engineering from simple programming. Clean code is the practice of writing code that is clear, simple, and readable to other humans. It’s paired with refactoring, the disciplined process of restructuring existing code to improve its internal design without changing its external behavior, effectively managing technical debt.

This principle is fundamental to long-term project success. For instance, the Linux kernel has maintained its stability and performance across decades of contributions, aided by strict coding standards. Similarly, tech giants like Google and Airbnb publish extensive style guides that have become industry benchmarks, keeping their massive codebases manageable and consistent for thousands of engineers.

How to Implement Clean Code and Refactoring

Integrating clean code and continuous refactoring requires a shift in mindset, viewing code quality as a feature, not an afterthought. These software engineering best practices ensure your system remains healthy and easy to modify.

- Follow the “Boy Scout Rule”: Always leave the code a little cleaner than you found it. This could be as simple as renaming a confusing variable or breaking up a long function during a bug fix.

- Use Meaningful Names: Variables, functions, and classes should clearly state their purpose. Avoid cryptic abbreviations and choose names that are descriptive and pronounceable, like `fetchUserProfile` instead of `getUsrDat`.

- Keep Functions Small and Focused: Each function should do one thing and do it well. Aim to keep functions short, ideally under 20 lines, and use early returns to avoid deep nesting of `if/else` statements.

- Refactor with a Safety Net: Before restructuring code, ensure you have a solid suite of tests in place. This allows you to make changes confidently, knowing the tests will catch any regressions in functionality.

- Leverage Automated Tools: Use linters (like ESLint) and code formatters (like Prettier) to automatically enforce style consistency across the entire team, eliminating debates over syntax.
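As a small before-and-after, the hypothetical function below is refactored in two ways. Cryptic identifiers are renamed, using the Python spelling `fetch_user_profile` for the `fetchUserProfile` idea above. Nested `if/else` blocks are replaced with early returns (guard clauses). Both versions return the same results, which is exactly what a test should confirm before the old one is deleted.

```python
from typing import Optional


# Before: cryptic names and nested conditionals.
def getUsrDat(u, d):
    if u is not None:
        if u.get("active"):
            if u["id"] in d:
                return d[u["id"]]
            else:
                return None
        else:
            return None
    else:
        return None


# After: descriptive names and early returns keep the happy path flat.
def fetch_user_profile(user: Optional[dict], profiles: dict) -> Optional[dict]:
    """Return the stored profile for an active user, or None."""
    if user is None:
        return None
    if not user.get("active"):
        return None
    return profiles.get(user["id"])
```

Because behavior is unchanged, a table of inputs run through both functions makes a quick safety net for this kind of refactor.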

7. Automated Testing Strategy

An automated testing strategy is the proactive process of building a safety net for your software, ensuring that new features don’t break existing ones and that the application behaves as expected. By writing code that tests your code, you can catch bugs early, refactor with confidence, and automate quality assurance. This approach moves testing from a manual, time-consuming phase to an integrated, continuous part of development, providing rapid feedback.

This practice is essential for maintaining high-quality software at scale. Google’s engineering culture is built on a massive automated testing infrastructure that runs over 150 million tests daily. Similarly, Netflix famously employs “Chaos Monkey,” an automated tool that randomly disables production instances to test system resilience, proving the value of automation even in the most complex environments.

How to Implement an Automated Testing Strategy

A robust strategy relies on a multi-layered approach, often visualized as the “Testing Pyramid,” which balances test speed, cost, and scope. This model, popularized by Mike Cohn, helps teams focus their efforts effectively.

- Build a Strong Base with Unit Tests: Write many small, fast tests that verify individual functions or components in isolation. These should form the largest part of your test suite, as they run in milliseconds and pinpoint failures precisely.

- Add Integration Tests: Write fewer tests that check how multiple components work together. These are slightly slower but crucial for verifying interactions, like ensuring an API endpoint correctly retrieves data from a database.

- Use End-to-End (E2E) Tests Sparingly: Implement a minimal number of E2E tests that simulate a full user journey through the application’s UI. While valuable for catching critical workflow issues, they are slow and often brittle.

- Automate Test Execution: Integrate your test suite into your CI/CD pipeline. This ensures tests are automatically run on every commit, preventing regressions from ever reaching the main branch.
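The bottom two layers of the pyramid might look like this in Python's `unittest`. The unit test checks a pure function in isolation. The integration test runs the same logic against a real, in-memory SQLite database through the standard-library `sqlite3` module. The `users` table and both functions are hypothetical. An E2E test would sit above these, driving the UI through a browser automation tool.

```python
import sqlite3
import unittest
from typing import Optional


def format_display_name(first: str, last: str) -> str:
    """Pure logic: easy to unit test in isolation."""
    return f"{first.strip().title()} {last.strip().title()}"


def get_display_name(conn: sqlite3.Connection, user_id: int) -> Optional[str]:
    """Data access plus logic: needs an integration test against a database."""
    row = conn.execute(
        "SELECT first_name, last_name FROM users WHERE id = ?", (user_id,)
    ).fetchone()
    return format_display_name(*row) if row else None


class UnitTests(unittest.TestCase):
    # Fast and precise: no I/O, so a failure points straight at one function.
    def test_format_display_name(self):
        self.assertEqual(format_display_name(" ada ", "LOVELACE"), "Ada Lovelace")


class IntegrationTests(unittest.TestCase):
    # Slower: exercises the SQL, the schema, and the Python code together.
    def setUp(self):
        self.conn = sqlite3.connect(":memory:")
        self.conn.execute(
            "CREATE TABLE users (id INTEGER PRIMARY KEY, first_name TEXT, last_name TEXT)"
        )
        self.conn.execute("INSERT INTO users VALUES (1, 'grace', 'hopper')")

    def tearDown(self):
        self.conn.close()

    def test_get_display_name_reads_from_database(self):
        self.assertEqual(get_display_name(self.conn, 1), "Grace Hopper")

    def test_missing_user_returns_none(self):
        self.assertIsNone(get_display_name(self.conn, 99))
```

An in-memory database keeps the integration layer fast enough to run on every commit. Tests against the production database engine can run later in the pipeline.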

Adopting this layered approach is a core software engineering best practice that enables teams to deliver reliable features quickly and predictably.

8. Documentation and Knowledge Management

Great code explains how it works, but great documentation explains why it exists. Documentation is the practice of creating and maintaining clear, accessible information about your system’s architecture, APIs, processes, and decisions. It acts as a guide for new team members, a reference for current developers, and a historical record that prevents knowledge from being lost when people leave. This is a core software engineering best practice that ensures a project’s long-term health and maintainability.

Industry leaders demonstrate its value. Stripe’s API documentation is legendary for its clarity and usability, making a complex product easy for developers to adopt. Similarly, GitLab’s public handbook is a radical approach to transparency, documenting nearly every company process for everyone to see. These examples show how treating documentation as a first-class product helps knowledge and collaboration scale.

How to Implement Documentation and Knowledge Management

Effective documentation is integrated directly into the development lifecycle, not treated as an afterthought. It should be a living part of your codebase and team processes. You can learn more about specific techniques in these code documentation best practices.

- Document the “Why,” Not Just the “What”: Your code shows what it does. Use documentation (like comments, READMEs, and commit messages) to explain why a particular approach was chosen, what alternatives were considered, and what business logic it fulfills.

- Keep Docs Close to Code: Store documentation in the same repository as the code it describes. Markdown files like `README.md` and `CONTRIBUTING.md` are easily versioned and stay in sync with code changes.

- Maintain Architecture Decision Records (ADRs): For significant architectural choices (e.g., choosing a database or a microservice pattern), create a short document outlining the context, the decision, and its consequences. This prevents future debates and provides clarity for new team members.

- Automate Where Possible: Use tools like Swagger/OpenAPI for APIs or JSDoc for JavaScript to generate documentation directly from your code. This ensures accuracy and reduces the manual effort required to keep things updated.
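Because ADRs are short and follow a fixed shape, they are easy to template. This hypothetical helper renders one as Markdown using the common Status / Context / Decision / Consequences layout popularized by Michael Nygard. It also derives a zero-padded, numbered filename so the records sort chronologically.

```python
import re
from datetime import date
from typing import Optional


def adr_filename(number: int, title: str) -> str:
    """For example, (7, "Use PostgreSQL") gives "0007-use-postgresql.md"."""
    slug = "-".join(re.findall(r"[a-z0-9]+", title.lower()))
    return f"{number:04d}-{slug}.md"


def render_adr(number: int, title: str, context: str, decision: str,
               consequences: str, status: str = "Accepted",
               decided_on: Optional[date] = None) -> str:
    """Render an ADR as Markdown; defaults the date to today."""
    decided_on = decided_on or date.today()
    return (
        f"# {number}. {title}\n\n"
        f"Date: {decided_on.isoformat()}\n\n"
        f"## Status\n\n{status}\n\n"
        f"## Context\n\n{context}\n\n"
        f"## Decision\n\n{decision}\n\n"
        f"## Consequences\n\n{consequences}\n"
    )
```

A team might write the result to a `docs/adr/` directory (an assumed location) in the same repository. That keeps decisions versioned alongside the code, as the "Keep Docs Close to Code" bullet recommends.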

9. Security by Design and DevSecOps

Security by Design is a proactive approach that embeds security considerations into every phase of the software development lifecycle, rather than treating it as a final, often rushed, checkpoint. This philosophy is put into practice through DevSecOps, which integrates security tools and processes directly into the CI/CD pipeline, making security a shared responsibility for the entire team. The goal is to identify and fix vulnerabilities early when they are significantly cheaper and easier to resolve.

This shift from reactive to proactive security is a cornerstone of modern software engineering best practices. Companies like Microsoft have long championed this approach with their Security Development Lifecycle (SDL), a comprehensive framework that has become an industry standard. Similarly, after a major breach in 2019, Capital One completely re-engineered its processes around a robust DevSecOps model, demonstrating how critical this practice is for protecting sensitive data.

How to Implement DevSecOps

Integrating security into your daily workflow doesn’t have to be a monumental task. It starts with automating security checks and making security thinking a natural part of development.

- Automate Security Scanning: Integrate Static Application Security Testing (SAST) and Dynamic Application Security Testing (DAST) tools directly into your CI/CD pipeline. This ensures that every code change is automatically scanned for common vulnerabilities before it reaches production.

- Manage Dependencies: Use tools like GitHub’s Dependabot or Snyk to continuously scan your project’s dependencies for known vulnerabilities. These tools can automatically create pull requests to update insecure packages.

- Conduct Threat Modeling: For any new feature or major architectural change, hold a threat modeling session. This collaborative exercise helps the team identify potential security risks, such as those outlined in the OWASP Top 10, and plan mitigations from the outset.

- Secure Your Secrets: Never hardcode API keys, passwords, or other secrets in your codebase. Use a dedicated secret management system like HashiCorp Vault or AWS Secrets Manager to securely store and inject credentials at runtime.
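Two small habits capture the spirit of the last bullet. The first is to read credentials from the environment your secret manager populates at runtime, and to fail fast if one is missing. The second is to scan text for obviously leaked keys before it is committed. The sketch below is illustrative only. The `PAYMENTS_API_KEY` variable name is hypothetical, and the single pattern (long-term AWS access key IDs, which begin with `AKIA`) is a tiny subset of what dedicated scanners such as gitleaks or GitHub secret scanning detect.

```python
import os
import re

# Long-term AWS access key IDs are 20 characters starting with "AKIA".
# Real scanners ship many patterns; this one is just an example.
AWS_ACCESS_KEY_ID = re.compile(r"\bAKIA[0-9A-Z]{16}\b")


class MissingSecretError(RuntimeError):
    """Raised when a required secret was not injected into the environment."""


def require_secret(name: str) -> str:
    """Read a secret provided at runtime (e.g. by a secret manager or platform)."""
    value = os.environ.get(name)
    if not value:
        raise MissingSecretError(f"Secret {name!r} is not set; refusing to start.")
    return value


def find_hardcoded_keys(source: str) -> list[str]:
    """Return substrings of `source` that look like AWS access key IDs."""
    return AWS_ACCESS_KEY_ID.findall(source)
```

An application would call something like `require_secret("PAYMENTS_API_KEY")` at startup rather than embedding the key. A pre-commit hook could run `find_hardcoded_keys` over staged files as a last line of defense.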

10. Modular Architecture and Design Patterns

A modular architecture structures software into independent, loosely coupled components that can be developed, tested, and deployed separately. This approach, paired with established design patterns, provides a blueprint for building systems that are maintainable, scalable, and resilient. Instead of a monolithic codebase where every part is entangled, modularity allows teams to work in parallel and contains the impact of failures.

This practice is essential for complex systems. Netflix was an early, high-profile adopter of microservices, moving away from a monolithic architecture so its teams could innovate and deploy features independently at massive scale. Similarly, Amazon’s service-oriented architecture allows for rapid innovation across its vast e-commerce platform by breaking down complex business logic into manageable, well-defined services.

How to Implement Modular Design

Adopting a modular architecture isn’t about choosing microservices by default; it’s about making deliberate choices to manage complexity. Starting with a well-structured monolith and strategically breaking it down is often a more practical path.

- Define Clear Boundaries: Use principles from Domain-Driven Design (DDD) to identify business capabilities and define clear, logical boundaries for your modules or services.

- Establish API Contracts: Define how modules communicate using strict API contracts, like an OpenAPI specification. This ensures services can evolve independently without breaking consumers.

- Apply Proven Design Patterns: Use well-known patterns (e.g., MVC, Layered Architecture, Circuit Breaker) to solve recurring problems. Don’t chase trends; choose patterns that fit your specific challenges.

- Document Architectural Decisions: Use Architectural Decision Records (ADRs) to document significant choices, their context, and their consequences. This provides crucial clarity for future development.
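Circuit Breaker, one of the patterns named above, keeps a failing dependency from dragging down its callers. After a run of consecutive failures the breaker "opens" and rejects calls immediately. Once a cool-down has passed, it allows a trial call. This is a minimal, single-threaded sketch with arbitrary thresholds. The clock is injectable only so the behavior can be exercised without sleeping. Production systems would usually rely on a maintained resilience library.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised when the breaker is open and the call is short-circuited."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None  # None means "closed"

    @property
    def state(self) -> str:
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_timeout:
            return "half-open"  # cool-down elapsed: allow a trial call
        return "open"

    def call(self, fn: Callable[[], T]) -> T:
        if self.state == "open":
            raise CircuitOpenError("dependency unavailable; failing fast")
        try:
            result = fn()
        except Exception:
            self.failures += 1
            # A failed trial call, or too many failures, (re)opens the circuit.
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        # Any success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

Callers wrap each outbound request, for example `breaker.call(lambda: client.get_rates())` with a hypothetical `client`. They handle `CircuitOpenError` by serving a cached or degraded response instead of waiting on a timeout.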

Top 10 Software Engineering Practices Comparison

| Item | Implementation Complexity 🔄 | Resource Requirements ⚡ | Expected Outcomes 📊 | Ideal Use Cases 💡 | Key Advantages ⭐ |
|---|---|---|---|---|---|
| Version Control and Git Workflow | Medium to High: branching, merging, and tooling | Moderate: Requires understanding Git tools & workflows | Reliable code history, collaboration, rollback capability | Team collaboration, parallel development, code review | Strong collaboration, safety net, industry standard |
| Test-Driven Development (TDD) | High: Requires writing tests before code, discipline | High upfront time investment, continuous testing effort | Higher code quality, fewer bugs, living documentation | Projects needing high reliability, complex logic | Significant bug reduction, better design, confidence |
| Code Review and Pair Programming | Medium: Requires process changes and time allocation | Moderate: Multiple developers involved simultaneously | Improved code quality, knowledge sharing, fewer defects | Knowledge transfer, early defect detection, team cohesion | Early bug detection, consistent standards, team collaboration |
| Continuous Integration / Deployment (CI/CD) | High: Setup of automated pipelines & infrastructure | High: Need for automation tooling, tests, and monitoring | Faster delivery, early integration issue detection | Rapid release cycles, safer deployments, scalable apps | Early feedback, reduced manual errors, faster time-to-market |
| Agile and Iterative Development | Medium: Requires cultural change and iterative planning | Moderate: Team involvement, tools for sprints & backlog | Increased adaptability, frequent delivery, stakeholder engagement | Projects with evolving requirements and need for flexibility | Quick adaptation, early value delivery, improved collaboration |
| Clean Code and Refactoring | Medium: Ongoing discipline and agreed standards | Moderate: Time for continuous improvements | Maintainable, readable code, reduced technical debt | Long-term projects needing maintainability | Easier maintenance, fewer bugs, improved productivity |
| Automated Testing Strategy | High: Requires extensive automated test creation | High: Tools and maintenance of diverse test suites | Rapid regression detection, higher software reliability | Ensuring code stability, supporting CI/CD pipelines | Immediate bug detection, reduces manual testing, scalable quality |
| Documentation and Knowledge Management | Medium: Requires consistent effort and process | Moderate: Time for writing & updating docs, tools | Faster onboarding, preserved knowledge, better collaboration | Distributed teams, complex systems needing clarity | Reduces onboarding time, preserves knowledge, improves communication |
| Security by Design and DevSecOps | High: Embedding security early, integrating tools | High: Security expertise, tooling, team training | Reduced vulnerabilities, regulatory compliance | Security-critical applications, regulated industries | Early vulnerability detection, compliance, safer releases |
| Modular Architecture and Design Patterns | High: Requires careful design and domain modeling | High: Infrastructure and expertise for modular systems | Scalable, maintainable, resilient software | Large/complex systems needing scalability and flexibility | Parallel development, fault isolation, proven design solutions |

Integrating These Practices into Your Workflow

We’ve explored a comprehensive landscape of software engineering best practices, from the foundational principles of version control and clean code to the modern necessities of CI/CD, DevSecOps, and AI-assisted development. Adopting these practices isn’t about flipping a switch; it’s a gradual, intentional process of cultural and technical evolution. The goal is not perfection overnight, but consistent, incremental improvement that builds momentum over time.

Think of this journey as refactoring your team’s entire workflow. Just as you refactor code to improve its structure and readability, you can systematically refine your processes to enhance collaboration, quality, and velocity. The key is to avoid the overwhelming “big bang” approach. Instead, identify the areas with the most friction and start there.

Your Actionable Starting Points

To move from theory to practice, choose one or two high-impact areas to focus on first. A small, successful change can create the buy-in needed for broader adoption.

- For Under-Documented Projects: Start with Documentation and Knowledge Management. Mandate that every new feature PR must include updates to the `README.md` or relevant wiki pages.

- For Teams with High Bug Counts: Implement Test-Driven Development (TDD) for a single, critical module. Track the defect rate for that module over the next quarter to measure the impact.

- For Slow, Manual Deployments: Set up a basic CI/CD pipeline. Even a simple automated build-and-test workflow triggered on every commit removes repetitive manual steps and can save the team substantial time each month.

- For Inconsistent Codebases: Introduce automated linters and formatters as part of your Clean Code initiative. Make them a required check in your CI pipeline to enforce standards without manual effort.

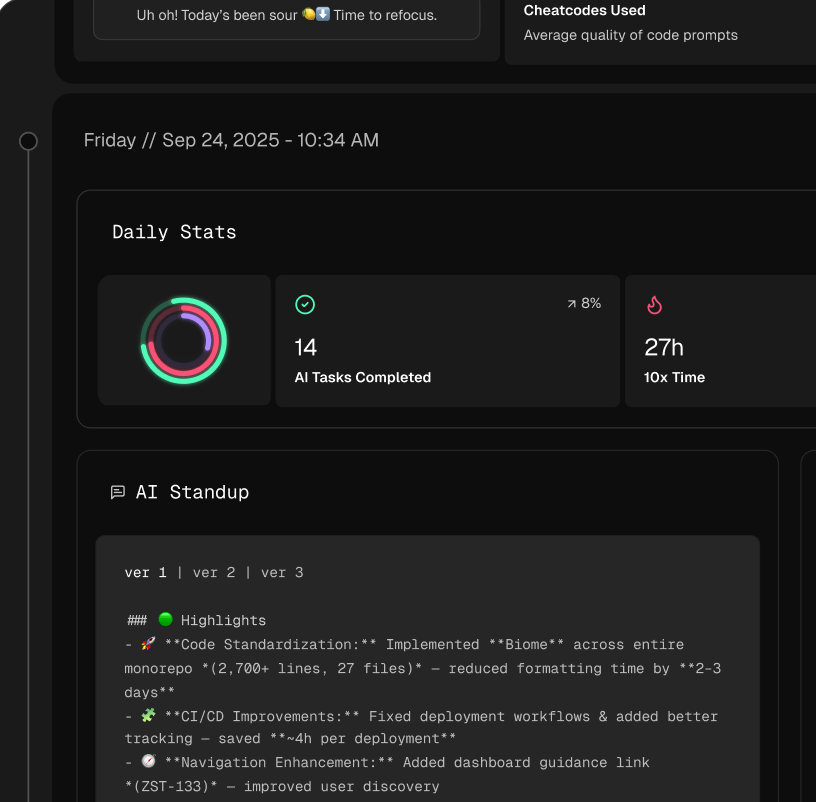

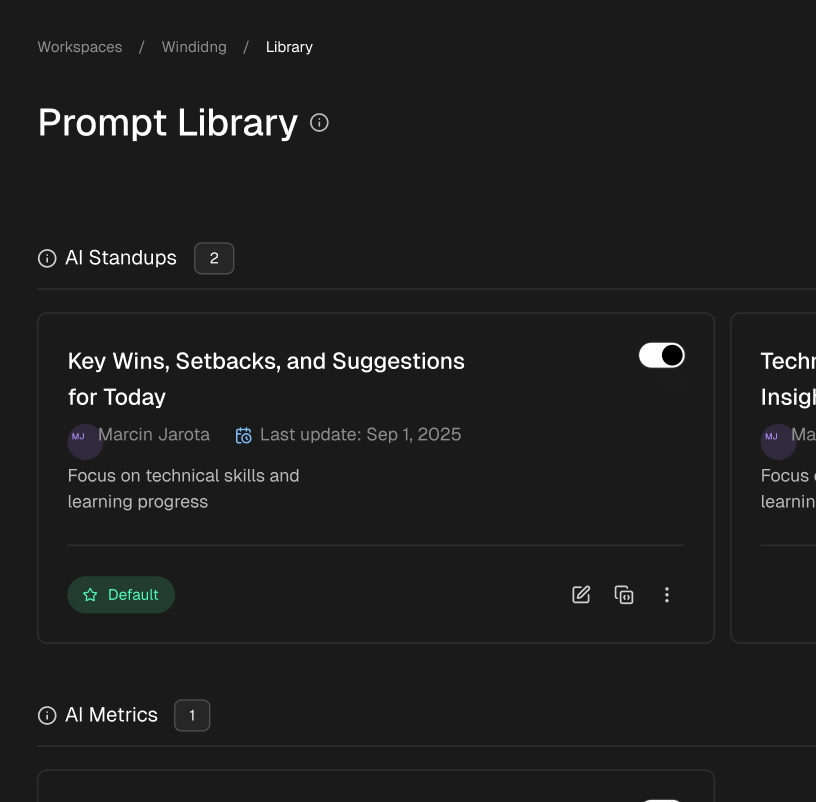

Embracing the Future: AI in Your Workflow

As we’ve highlighted, the modern definition of software engineering best practices now includes leveraging AI effectively. Your team is likely already experimenting with tools like GitHub Copilot or using AI assistants in IDEs like Cursor. The next frontier is moving from individual, ad-hoc usage to a standardized, team-wide strategy.

This is where prompt engineering becomes a critical skill. Crafting precise, context-aware prompts transforms AI from a simple code generator into a powerful diagnostic and verification tool. To make this tangible, consider standardizing checklists for common tasks, like the Astro deployment example below.

Key Takeaway: The most effective engineering teams don’t just adopt tools; they build repeatable, shareable systems around them. For AI, this means creating a repository of proven prompts and workflows that elevate the entire team’s output.

Practical Example: Astro → Cloudflare Workers Deployment Checklist

✅ Prompt

“Checklist: confirm my Astro site is deployed on Cloudflare Workers with best-in-class performance, SEO, and DX. Verify adapter, asset storage, caching, hydration, and SSR/SSG choices. Give me a clear yes/no on each item and point out fixes if something’s missing.”

📝 Example Response

Astro on Cloudflare Workers — Deployment Checklist

- Adapter → Using `@astrojs/cloudflare` or `@astrojs/cloudflare/worker`? → ✅ Yes

- Static vs SSR → Static pages prebuilt + only SSR where required (API, auth, etc.) → ✅ Yes

- Cold start avoidance → Worker bundle < 1MB, no heavy deps → ✅ Yes

- Assets → Offloaded to R2 / Cloudflare Images, not bundled in Worker → ❌ No → Fix: move images to R2 and rewrite URLs

- Caching → Edge cache headers set (`Cache-Control`, `stale-while-revalidate`) → ✅ Yes

- SEO → Title/meta tags verified, hreflang (if multilingual), robots.txt and sitemap.xml generated → ✅ Yes

- Core Web Vitals → Partial hydration configured, Lighthouse score >90 → ✅ Yes

- Global Edge → Tested from multiple PoPs (US/EU/Asia) for <100ms TTFB → ✅ Yes

- Limits → Worker within 30ms CPU budget, script <10MB → ✅ Yes

- Monitoring → Wrangler tail + Analytics enabled → ✅ Yes

Result: 9/10 checks passed. Only asset storage needs fixing.
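Some checklist items can be verified by a script rather than by eye. As one example, this hypothetical Python helper parses a page's `Cache-Control` header. It reports whether the header sets a cache lifetime (`s-maxage` or `max-age`) and a `stale-while-revalidate` window, and whether it avoids `private`/`no-store`. The pass criteria are assumptions. The helper also inspects only the headers the site returns, which does not by itself prove how the edge cache behaves.

```python
import urllib.request
from typing import Dict, Optional


def parse_cache_control(header: str) -> Dict[str, Optional[str]]:
    """Split 'public, max-age=60, stale-while-revalidate=30' into a dict."""
    directives: Dict[str, Optional[str]] = {}
    for part in header.split(","):
        part = part.strip()
        if not part:
            continue
        name, _, value = part.partition("=")
        directives[name.strip().lower()] = value.strip().strip('"') or None
    return directives


def caching_check(header: str) -> Dict[str, bool]:
    """Yes/no answers for the caching item of the checklist."""
    d = parse_cache_control(header)
    return {
        "has_ttl": "s-maxage" in d or "max-age" in d,
        "has_swr": "stale-while-revalidate" in d,
        "not_private": "private" not in d and "no-store" not in d,
    }


def fetch_cache_control(url: str) -> str:
    """HEAD request so only headers are transferred."""
    req = urllib.request.Request(url, method="HEAD")
    with urllib.request.urlopen(req, timeout=10) as resp:
        return resp.headers.get("Cache-Control", "")
```

Pointed at a page on the deployed site, `caching_check(fetch_cache_control(url))` turns one checklist line into a repeatable check that a CI job could run after each deployment.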

Ready to turn individual AI experiments into team-wide best practices? Zest helps your organization capture, share, and measure the impact of AI-assisted workflows. Stop letting valuable prompts and techniques disappear into private chats—standardize what works and accelerate your entire team. Discover how Zest can optimize your AI adoption
